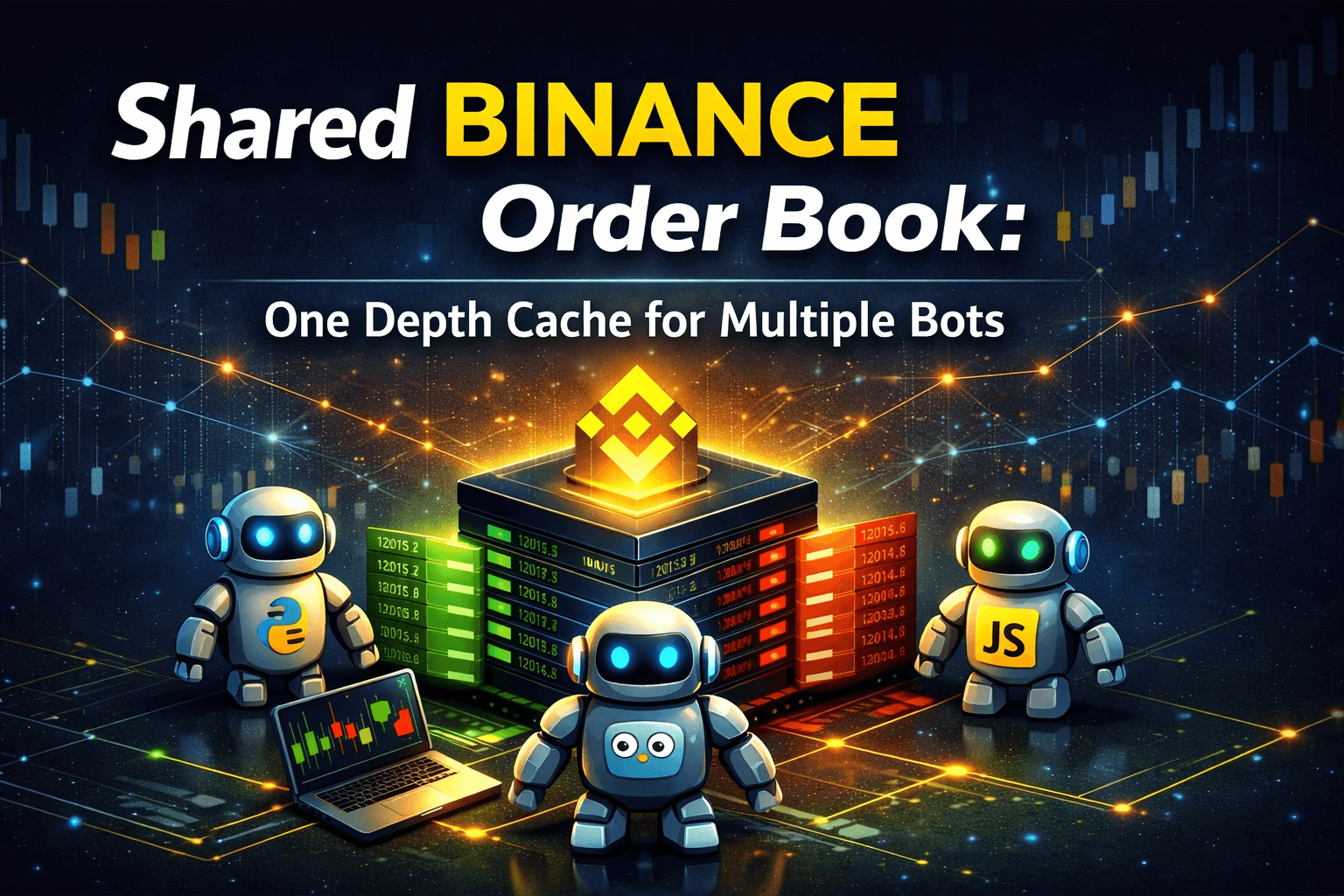

Why Your Binance Order Book Should Not Live Inside Your Bot

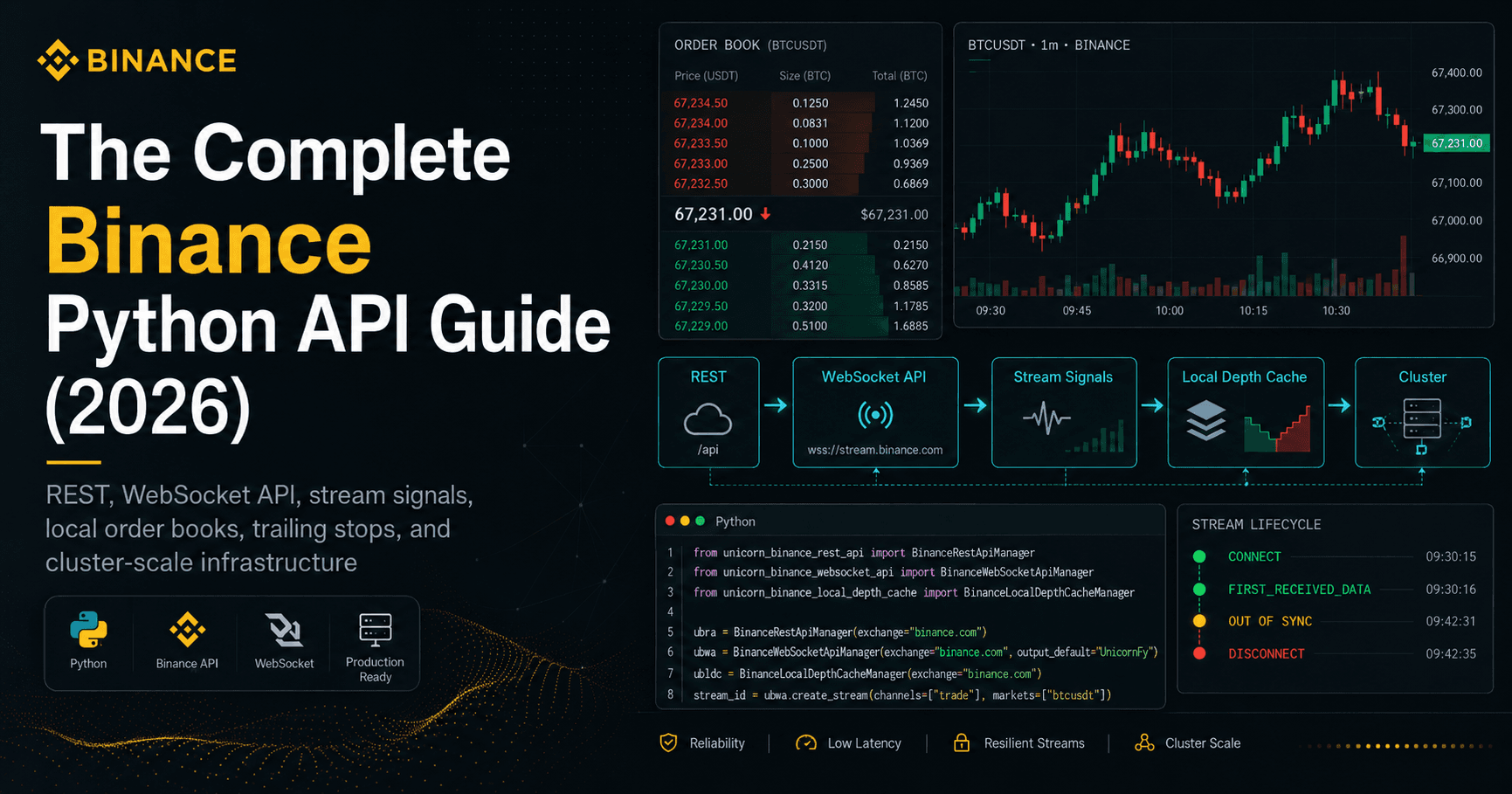

How I turned a per-process depth cache into shared infrastructure for Python, Node.js, Go, Rust, and anything that can speak HTTP.

Most Binance bots start the same way.

One process opens a WebSocket, pulls a depth snapshot, keeps a local order book in memory, and runs a strategy loop on top. For one bot, on one machine, this is fine. Sometimes it is even elegant.

Then the setup grows.

A second bot appears. A dashboard wants the same data. A sidecar service needs top-of-book prices. A teammate working in JavaScript wants access too. Reconnects pile up. Snapshot requests start burning rate limit budget. And if the one process holding a market dies, your market view dies with it.

Suddenly the order book living inside one bot process is no longer a neat design choice. It is now the bottleneck.

That is the problem this post is about.

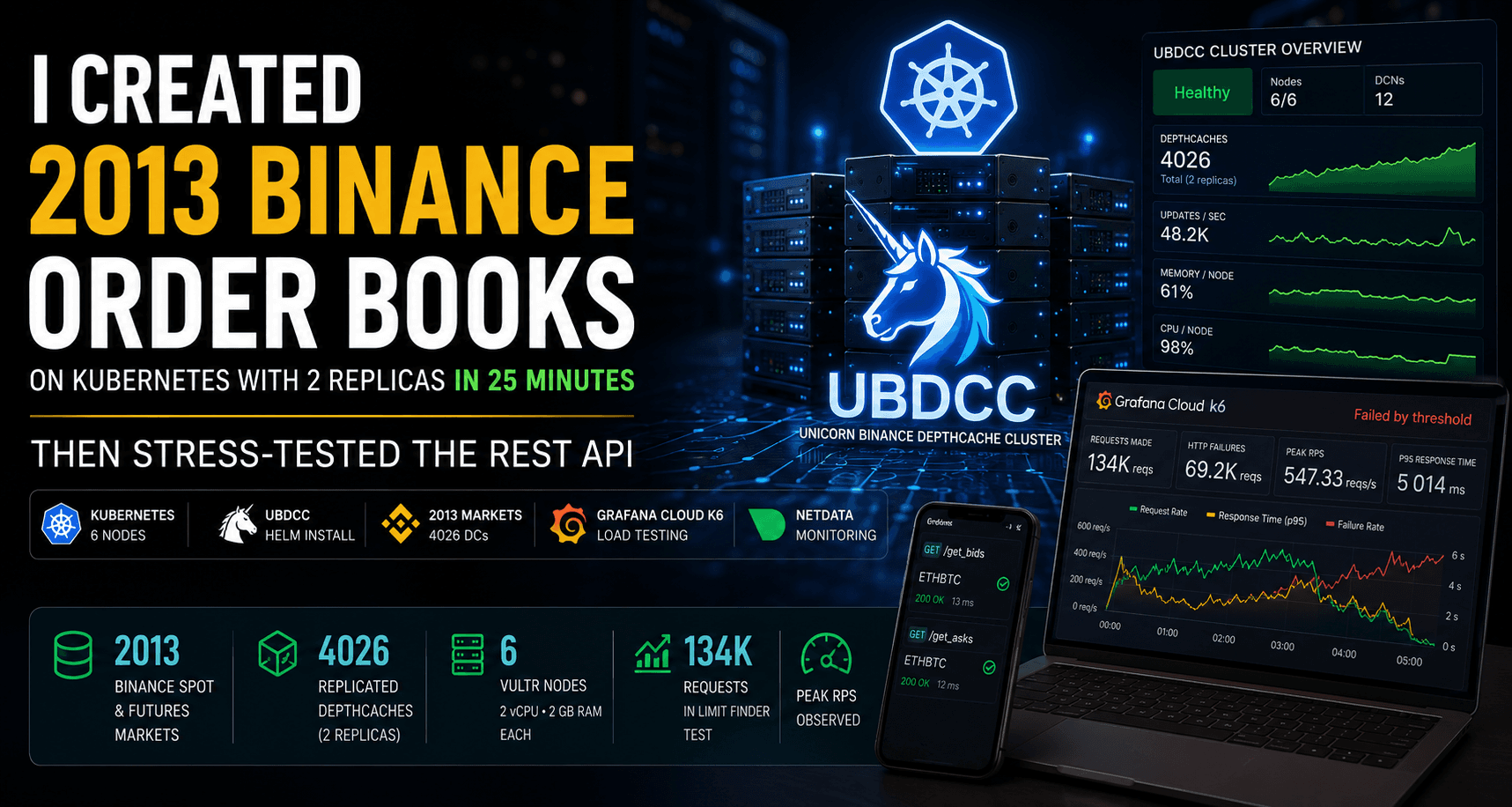

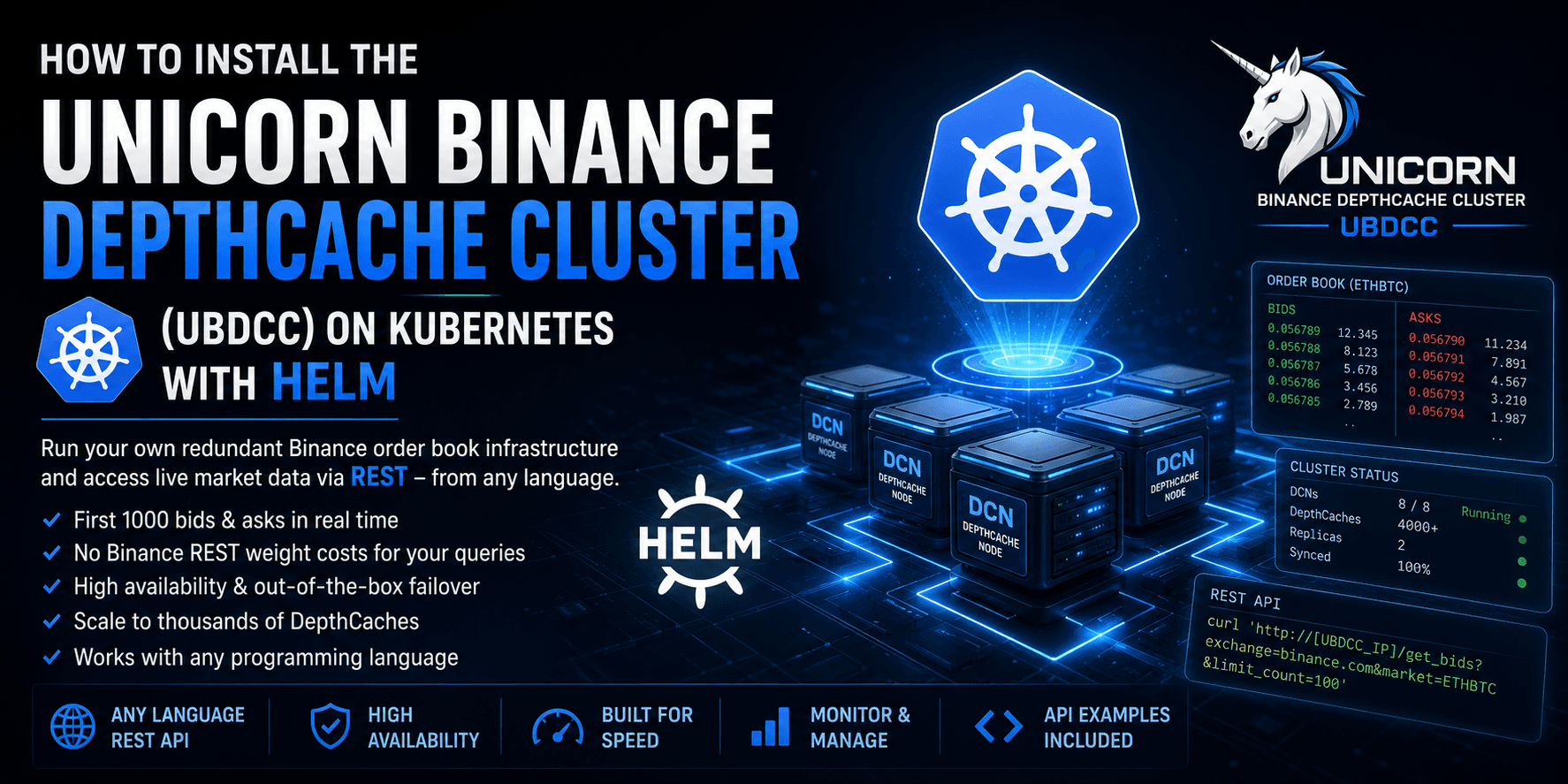

And that is why I built UBDCC — the UNICORN Binance DepthCache Cluster.

The core problem

A local depth cache inside one process is easy to start with, but hard to share, hard to scale, and fragile.

That creates four common failure modes.

1. One bot becomes two

The first strategy works, so you launch a second one.

Now both bots maintain their own WebSocket connections, their own snapshots, and their own copies of the same order books in separate memory spaces. You doubled the moving parts without gaining new market data. After a reconnect or short network disturbance, those two processes can temporarily hold slightly different views of the same market.

That is not great when both are making decisions off the same symbol.

2. Other services need the same data

Serious setups rarely stop at one bot.

Soon there is:

a dashboard

an alerting service

a sanity-check script

a backtester consuming live spreads

some quick internal tool that “just needs the top 5 asks”

If the depth cache only exists inside a Python process, every new consumer either needs its own cache or some custom integration. Both options are ugly.

And if one of those consumers is written in Node.js, Go, Rust, or Bash, it gets uglier fast.

3. Rate limits become infrastructure limits

Every initial depth snapshot costs request weight.

If every process builds its own local cache, every process burns its own weight budget. Reconnect storms multiply the cost. Large market sets become slow to initialize. On one IP, the wall arrives quickly.

For strategies like triangular arbitrage, where a bot may depend on dozens or even hundreds of depth caches, restarting the strategy during development because of one changed line of code can mean waiting all over again for the entire in-process market view to rebuild and re-sync.

That is a lot of wasted time for something that has nothing to do with strategy logic.

4. One cache becomes a single point of failure

A local depth cache inside one bot process is not just hard to share. It is also fragile.

If that process dies, your market view for that symbol dies with it. The usual workaround is not real high availability — it is just duplication by coincidence, with different processes maintaining different copies of the same book.

In a serious setup, market data should not depend on one process staying alive. It should be a shared service with explicit redundancy and failover.

So the real problem is not “how do I keep one order book in sync?”

The real problem is:

How do I turn order book data into shared infrastructure instead of per-process state?

The architectural answer

The clean answer is simple:

one shared depth-cache layer, many consumers

Instead of every bot owning its own WebSocket connection and local order book, you run one service that manages order books centrally and exposes them over HTTP.

That is what UBDCC does.

UBDCC turns Binance order books from per-process state into shared infrastructure.

Anything that can speak HTTP can consume the data:

Python

Node.js

Go

shell scripts with

curldashboards

monitoring

internal tools

That is the key shift.

Not “another library”. Not “another bot framework”.

A shared order book layer.

What UBDCC is

UBDCC is an MIT-licensed cluster service for shared Binance depth caches.

It manages:

WebSocket connections

depth snapshot initialization

synchronized local order books

distribution of caches across worker processes

access through a REST API

So instead of this:

bot A keeps BTCUSDT in memory

bot B keeps BTCUSDT in memory

dashboard keeps BTCUSDT in memory

…you get this:

UBDCC keeps BTCUSDT in memory

bot A queries it

bot B queries it

dashboard queries it

One source of truth. Multiple consumers.

The cluster model

UBDCC consists of three roles:

mgmt — coordinates cluster state and assigns work

restapi — the public HTTP interface clients talk to

dcn (DepthCache Node) — worker processes that run the actual depth caches via UBLDC

Each DCN is one Python process, which maps nicely to one CPU core. On a single 8-core machine, you can run one management instance, one REST API, and multiple DCNs handling hundreds of depth caches.

On Kubernetes, the same pattern scales horizontally.

The important point is not the exact layout.

The important point is that your strategies no longer own the order books.

What this solves in practice

Shared data across multiple bots

Two or ten strategies can consume the same books without duplicating Binance connections or cache state.

You pay one network hop to the REST API and remove a lot of duplicated infrastructure.

Built-in redundancy and failover

A DepthCache does not have to exist only once.

With desired_quantity=2, UBDCC keeps two replicas of the same market on different DCN nodes. If one node dies, the REST API can route the request to the surviving replica. That gives you real high availability for market data instead of hoping that one local in-process cache stays alive.

This is one of the biggest differences compared to the usual “every bot keeps its own order book” setup. In the usual model, redundancy is accidental and inconsistent. In UBDCC, redundancy is explicit and controlled.

Language-agnostic access

A frontend or support tool does not need to understand Binance stream semantics, sequence handling, reconnect logic, or Python internals.

It makes an HTTP request and gets JSON back.

That is a huge simplification.

Better scaling under rate limits

UBDCC can distribute workload across multiple machines and therefore across multiple public IPs.

That spreads snapshot initialization and reconnect load. Since version 0.4.0, it can also assign optional Binance API credentials across nodes to benefit from higher authenticated rate limits where applicable.

No credentials are required for public endpoints. The authenticated path is optional.

What it looks like to use

Install:

pip install ubdcc

Start a local cluster with four DepthCache nodes:

ubdcc start --dcn 4

Create some shared depth caches:

curl -X POST 'http://127.0.0.1:42081/create_depthcaches' \

-H 'Content-Type: application/json' \

-d '{"exchange": "binance.com", "markets": ["BTCUSDT", "ETHUSDT"], "desired_quantity": 2}'

Query the top 5 asks:

curl 'http://127.0.0.1:42081/get_asks?exchange=binance.com&market=BTCUSDT&limit_count=5'

That is the whole idea: create once, consume anywhere.

For a full practical walkthrough with the dashboard, replicas, failover test, REST calls, and generated client snippets, see: From pip install to a Redundant Binance Order Book Cluster — UBDCC + Dashboard Quickstart

Python users are not locked out

If you already use UBLDC for local order books, the cluster interface is built in.

You can point BinanceLocalDepthCacheManager at UBDCC and keep using familiar sync or async methods:

from unicorn_binance_local_depth_cache import BinanceLocalDepthCacheManager, DepthCacheClusterNotReachableError

import asyncio

async def main():

await ubldc.cluster.create_depthcaches_async(

exchange="binance.com",

markets=["BTCUSDT", "ETHUSDT"],

desired_quantity=2,

)

while not ubldc.is_stop_request():

print(await ubldc.cluster.get_asks_async(

exchange="binance.com",

market="BTCUSDT",

limit_count=5

))

try:

with BinanceLocalDepthCacheManager(

exchange="binance.com",

ubdcc_address="127.0.0.1",

ubdcc_port=42081

) as ubldc:

asyncio.run(main())

except DepthCacheClusterNotReachableError as exc:

print(f"ERROR: {exc}")

So UBDCC is not an alternative to Python-native workflows.

It is the shared infrastructure layer underneath them.

Why I trust it

There are four parts that matter to me.

It does not silently serve stale books

UBDCC is built on UBLDC, which validates Binance sequence numbers, re-syncs on gaps, and handles orphaned levels correctly instead of leaving ghost entries in the book.

A shared DepthCache is only useful if consumers also know its state. UBDCC does not just hold the cache — it knows whether that cache is actually in sync, re-synchronizing, or temporarily not safe to use. That is exactly the information a serious strategy needs before trusting market data.

If a book is re-syncing, that state is explicit.

That matters more than most people think.

I wrote a separate deep dive on the orphaned-level problem here: Your Binance Order Book Is Wrong — Here's Why

That post explains why a Binance-compatible local order book can look correct while silently accumulating stale price levels over time.

Failover is transparent

Depth caches can run redundantly across nodes. In practice that means you can keep the same cache twice and survive the loss of one DCN without losing the market view for that symbol.

If one node fails, requests are routed to another replica. But the failover is not hidden. Responses can report that a failover happened and which pod failed before the request succeeded.

That is how infrastructure should behave: resilient, but observable.

The cluster manages its own state

Cluster state is replicated internally across nodes.

If the management process dies, it can recover from the most recent surviving state. No external Redis or etcd dependency is required just to keep the cluster alive.

It is fast enough to be infrastructure

The stack is async end-to-end, and the core packages are Cython-compiled. In practice, this means low latency and enough throughput to make “shared order book as a service” actually usable instead of theoretically neat.

What UBDCC is not

It is not:

a strategy engine

a backtesting platform

an execution engine

a “make money with crypto” framework

It serves live order book data.

That is its job.

Who should look at it

A solo developer running multiple bots

You want one shared market-data layer instead of duplicate depth caches all over the machine.

A team with several services consuming the same books

You want bots, dashboards, alerts, and internal tools reading from one consistent source.

A larger setup running into rate-limit and scaling pain

You want to spread load across nodes, IPs, and optional authenticated request budgets.

If none of this sounds familiar, you probably do not need UBDCC yet.

But when the question becomes:

“Can my second bot read the same order book without rebuilding everything?”

Then you are already in UBDCC territory.

Why I think this matters

There are many examples online showing how to build a Binance bot.

There is far less material about what happens when one bot becomes several consumers, several services, several machines, and real infrastructure concerns.

That gap is exactly why I built this.

Not because the architecture is magical.

Because at some point it becomes the obvious architecture — and building it yourself is annoying, time-consuming, and easy to get wrong.

Try it

If your order books are trapped inside individual bot processes, UBDCC is the escape hatch.

UBDCC is MIT-licensed and part of the UNICORN Binance Suite.

I hope you found this informative and useful.

Follow me on Binance Square, GitHub, X, and LinkedIn, or join Telegram for updates on my latest publications. Constructive feedback is always appreciated.

Thank you for reading, and happy coding! ¯\_(ツ)_/¯